Uncertainty, Behavior, and the Limits of Rationality

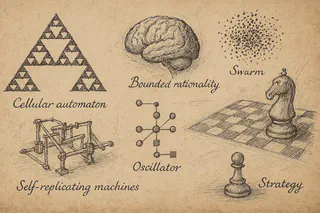

Better decisions often come from better data, and executives are right to rely on it. But in many real-world scenarios, decisions are made under uncertainty and in reaction to others: competitors, customers, regulators, teams. This is where 𝗯𝗲𝗵𝗮𝘃𝗶𝗼𝗿𝗮𝗹 𝘀𝗰𝗶𝗲𝗻𝗰𝗲 and the idea of 𝗯𝗼𝘂𝗻𝗱𝗲𝗱 𝗿𝗮𝘁𝗶𝗼𝗻𝗮𝗹𝗶𝘁𝘆 come in. People 𝘪𝘯𝘵𝘦𝘯𝘥 to make good decisions, but what counts as “good” depends on what they know, how much time they have, and what they expect others to do.

In last night’s MTAC** session, we presented situations like these to graduate students and asked them to model each one, reconstructing the resulting phenomena from the decisions of individual agents.

We began with thought exercises. No code at first, just their sense of how the systems might behave. When they shared their models, we asked: 𝘊𝘢𝘯 𝘵𝘩𝘢𝘵 𝘭𝘰𝘨𝘪𝘤 𝘣𝘦 𝘤𝘰𝘥𝘪𝘧𝘪𝘦𝘥? 𝘊𝘢𝘯 𝘪𝘵 𝘣𝘦 𝘦𝘹𝘱𝘳𝘦𝘴𝘴𝘦𝘥 𝘪𝘯 𝘵𝘩𝘦 𝘭𝘢𝘯𝘨𝘶𝘢𝘨𝘦 𝘰𝘧 𝘴𝘺𝘴𝘵𝘦𝘮𝘴—𝘵𝘩𝘦 𝘭𝘢𝘯𝘨𝘶𝘢𝘨𝘦 𝘰𝘧 𝘮𝘢𝘵𝘩𝘦𝘮𝘢𝘵𝘪𝘤𝘴? That shift from intuition to formalism is part of the real work of data scientists.

They all looked at the same scenarios. But every model came out different. That says something. Computational modeling is technical, but it’s also about framing. What matters? What can you ignore? What do you assume people will actually do?

Later, we explored 𝗪𝗼𝗹𝗳𝗿𝗮𝗺’𝘀 𝗰𝗲𝗹𝗹𝘂𝗹𝗮𝗿 𝗮𝘂𝘁𝗼𝗺𝗮𝘁𝗮 and 𝗖𝗼𝗻𝘄𝗮𝘆’𝘀 𝗚𝗮𝗺𝗲 𝗼𝗳 𝗟𝗶𝗳𝗲. A few simple rules produced behaviors that were complex and often counterintuitive.
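To make "a few simple rules" concrete, here is a minimal Python sketch of both systems. It is an illustration, not the code used in the session: the function names `eca_step` and `life_step`, the wrap-around edges in the one-dimensional automaton, and the set-of-coordinates representation for Life are all assumptions chosen for brevity.

```python
def eca_step(cells, rule=30):
    """Advance a one-dimensional elementary cellular automaton by one step.

    cells is a list of 0/1 values. Edges wrap around (an assumption made
    here to keep the sketch short). rule is the Wolfram rule number, 0-255.
    """
    n = len(cells)
    nxt = []
    for i in range(n):
        left, centre, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        # Read the three-cell neighbourhood as a number from 0 to 7 ...
        index = (left << 2) | (centre << 1) | right
        # ... and look up the matching bit of the rule number.
        nxt.append((rule >> index) & 1)
    return nxt


def life_step(live):
    """Advance Conway's Game of Life by one generation.

    live is a set of (row, col) pairs marking live cells on an unbounded grid.
    """
    # Count live neighbours for every cell adjacent to at least one live cell.
    counts = {}
    for (r, c) in live:
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                if dr or dc:
                    key = (r + dr, c + dc)
                    counts[key] = counts.get(key, 0) + 1
    # Birth on exactly 3 neighbours; survival on 2 or 3.
    return {cell for cell, k in counts.items()
            if k == 3 or (k == 2 and cell in live)}


if __name__ == "__main__":
    row = [0] * 15 + [1] + [0] * 15
    for _ in range(12):
        print("".join("#" if x else "." for x in row))
        row = eca_step(row, rule=30)
```

Each cell looks only at its immediate neighbours, yet Rule 30 started from a single live cell grows into an irregular triangle, and a five-cell glider in Life travels diagonally across the grid. Nothing in either rule mentions triangles or motion; both come out of repeated local updates.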

These exercises remind us that many systems, from markets to organizations and even teams, emerge from local decisions shaped by interactions with, and reactions to, others. We operate within constraints, using partial views of the whole. Modeling gives us a way to surface those interactions and ask better questions about how structure forms, not by design but through behavior.

** 𝑀𝑜𝑑𝑒𝑙 𝑇ℎ𝑖𝑛𝑘𝑖𝑛𝑔, 𝐴𝑙𝑔𝑜𝑟𝑖𝑡ℎ𝑚𝑠, 𝑎𝑛𝑑 𝐶𝑜𝑚𝑝𝑢𝑡𝑎𝑡𝑖𝑜𝑛𝑠